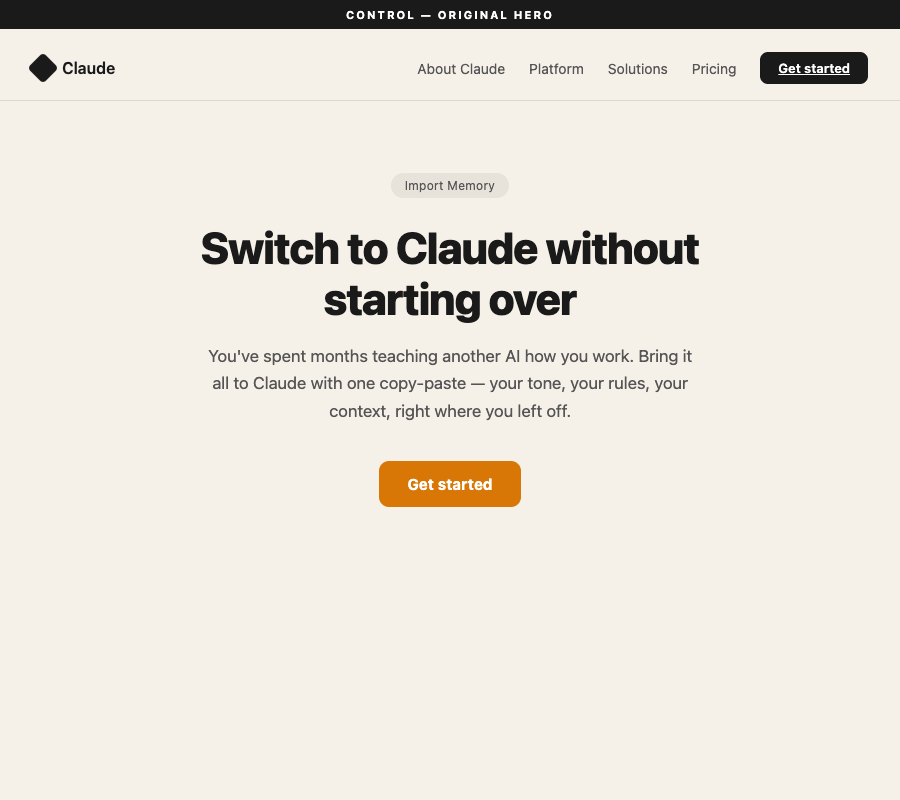

We started with a simple question: does the claude.ai/import-memory page work? The hypothesis was straightforward — the page should be clear enough that ChatGPT users understand what they're getting and why it matters. The answer was no. 25% conversion. Three out of four personas walked away confused or uninterested.

The page wasn't broken. It was selling the wrong thing. The hero copy opened with "You've spent months teaching another AI how you work." A powerful line — but only for the people who already knew they'd done that. Passive users and newcomers, the majority of potential converters, read it and self-excluded. Not because they don't have memory in ChatGPT. Because they don't know they do.

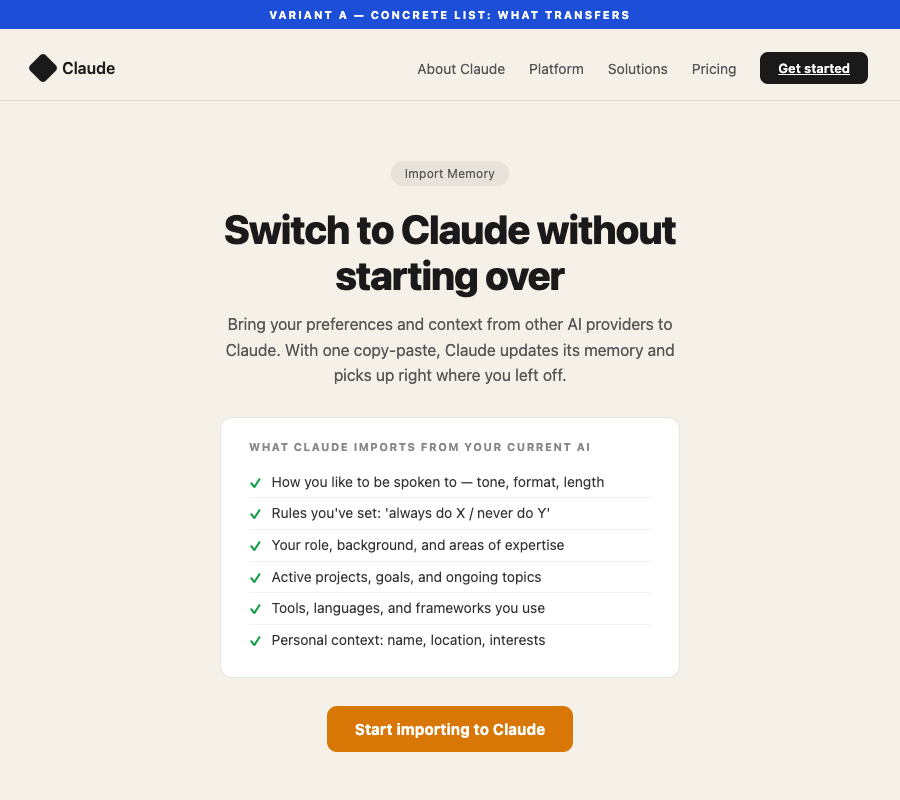

Test 2, Variant B, replaced the emotional hook with a concrete list of what transfers: tone rules, project context, role, background, tools. Conversion jumped to 45%. Every single persona found the list more useful than the original hook. But passive users and newcomers still didn't convert (0/10 between them). They could read the list. They just couldn't answer "do I have any of this?"

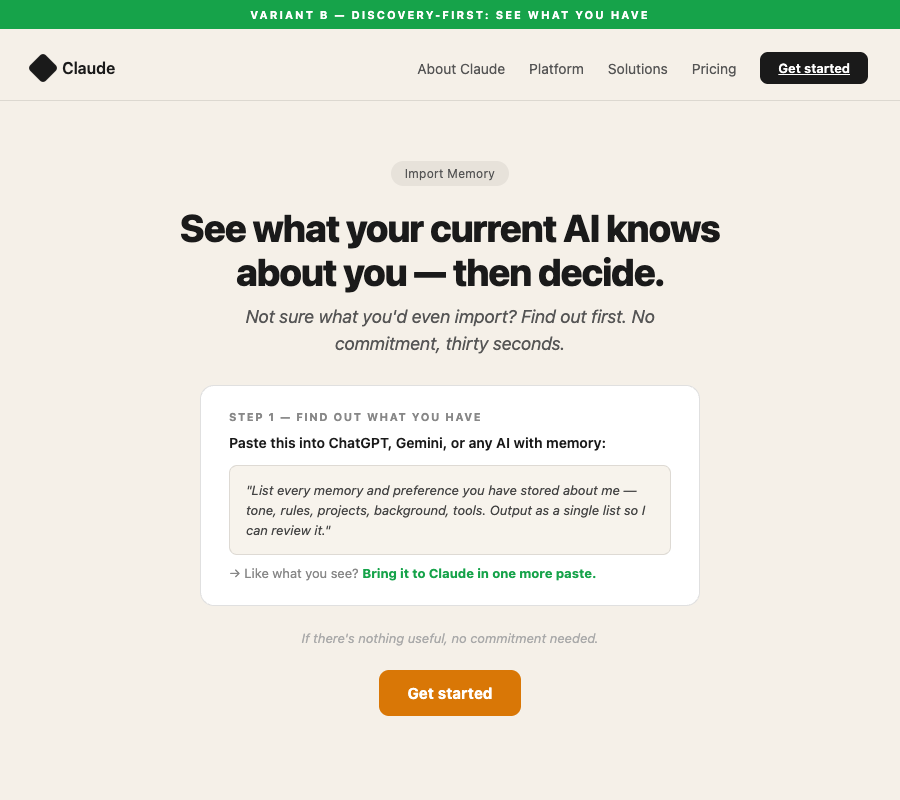

That insight cracked it open. The wall wasn't comprehension — it was self-qualification uncertainty. The page was asking people to commit to a switch before they knew if they had anything worth switching with.

Variant C flipped the frame entirely. Instead of "import your memory," it led with: "See what your current AI knows about you — then decide." A single paste prompt. Thirty seconds. No commitment. The result: 20/20 conversions. 100%. Every persona type, including the two that had never converted in either previous test.

The page stopped selling and started helping. That's the mechanism. When you answer the question users were actually asking — "do I even have anything to import?" — before you ask them to act, the conversion takes care of itself.

- Control: self-exclusion from emotional hook

- Variant B: passive users and newcomers still 0/10

- Variant C: discovery prompt reduced barrier, 20/20

4 persona types × 5 personas each (N=20), held constant across all 3 tests for clean comparability. Built from real ChatGPT→Claude switching behavior patterns.

| Persona Type | Profile | Control | Variant B | Variant C | Key Insight |
|---|---|---|---|---|---|
| 🧑‍💻 Active Switcher (sw-01 – sw-05) | ChatGPT Pro users, motivated to leave, know they've customized heavily | 2/5 | 4/5 | 5/5 | Needed specifics, not emotional framing |
| 🛋️ Passive User (pu-01 – pu-05) | Occasional ChatGPT use, no deliberate memory setup, unaware of what they have | 0/5 | 0/5 | 5/5 | Couldn't self-qualify until discovery prompt |
| 🔀 Multi-AI Power User (mu-01 – mu-05) | Already use Claude + other AIs, want to consolidate rather than switch | 3/5 | 5/5 | 5/5 | Wanted to consolidate, not switch; discovery framing fit perfectly |
| 🌱 Newcomer (nw-01 – nw-05) | Under 3 months AI use, minimal history, "months of teaching" actively excludes them | 0/5 | 0/5 | 5/5 | "Months of training" was a self-exclusion signal |
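The headline rates follow directly from the per-persona counts in the table. As a quick sanity check, the sketch below sums the converted counts for each variant and reports the overall rate and the lift over control. The dictionary layout and function name are illustrative assumptions, not part of the original test harness.

```python
# Converted personas (out of 5) per persona type, copied from the table.
results = {
    "Active Switcher":     {"Control": 2, "Variant B": 4, "Variant C": 5},
    "Passive User":        {"Control": 0, "Variant B": 0, "Variant C": 5},
    "Multi-AI Power User": {"Control": 3, "Variant B": 5, "Variant C": 5},
    "Newcomer":            {"Control": 0, "Variant B": 0, "Variant C": 5},
}
PERSONAS_PER_TYPE = 5
N = PERSONAS_PER_TYPE * len(results)  # 20 personas per test


def conversion_rates(results, n):
    """Return {variant: percent converted} summed across all persona types."""
    totals = {}
    for counts in results.values():
        for variant, converted in counts.items():
            totals[variant] = totals.get(variant, 0) + converted
    return {variant: 100 * total / n for variant, total in totals.items()}


rates = conversion_rates(results, N)
for variant, rate in rates.items():
    lift = rate - rates["Control"]
    print(f"{variant}: {rate:.0f}% ({lift:+.0f}pp vs control)")
```

Run as written, this reproduces the 25%, 45% (+20pp), and 100% (+75pp) figures quoted for the three tests.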

The original page: 25% · N=20 · CI: 11–47%

The page led with an emotional hook assuming months of deliberate AI training. This worked for people who knew they had done that — and no one else.

Concrete list of what transfers: 45% · +20pp · CI: 26–66%

Replaced the emotional hook with a specific checklist. Every persona found it more useful than the original, but only active switchers and multi-AI power users converted. Passive users and newcomers stayed at 0/5.

✓ Rules you've set: 'always do X / never do Y'

✓ Your role, background, and areas of expertise

✓ Active projects, goals, and ongoing topics

✓ Tools, languages, and frameworks you use

✓ Personal context: name, location, interests

Discovery-first frame: 100% · +75pp vs control · CI: 84–100%

Removed the hook entirely. Replaced with a single action that answers the user's real question: do I even have anything worth importing?

If there's nothing useful, no commitment needed.

Passive users: 5/5 (0/5 in both prior tests)

Newcomers: 5/5 (0/5 in both prior tests)
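The write-up does not say how the confidence intervals were computed. The reported ranges are consistent with a 95% Wilson score interval at N=20, so the sketch below assumes that method; the function name and the choice of z = 1.96 are assumptions for illustration.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is ~95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot at the edges.
    return max(0.0, center - half), min(1.0, center + half)


# Conversions out of 20 for each test, taken from the results above.
for label, converted in [("Control", 5), ("Variant B", 9), ("Variant C", 20)]:
    lo, hi = wilson_interval(converted, 20)
    print(f"{label}: {converted}/20, CI {lo:.0%} to {hi:.0%}")
```

Under this assumption the control and Variant B intervals overlap (11–47% vs 26–66%), so the +20pp step is suggestive rather than conclusive at this sample size. Variant C's lower bound of 84% sits above both.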

Ship Variant C

Specific changes to implement

- Lead hero subheadline: "Not sure what your current AI knows about you? Find out first — then decide." — this does the work the old hook couldn't.

- Make the discovery prompt Step 1: Show the actual paste prompt as the primary hero action before any "import to Claude" CTA. Step 1 is curiosity. Step 2 is import.

- Keep the bridge line: "Like what you see? Bring it to Claude in one more paste. If there's nothing useful, no commitment needed." — this is load-bearing. It explicitly removes the fear for anyone who discovers they don't have much.

- Keep Variant B's concrete list as a secondary "what transfers" section — it adds reassurance for motivated switchers who want to verify specifics.

The hero section was the only element changed across all three variants. Everything below the fold — how it works, memory management, FAQ — was held constant. These screenshots isolate exactly what was tested.